Simpsons Say

A Natural Language Processing search engine using Term Frequency - Inverse Document Frequency (TF-IDF) and k-Nearest Neighbors (k-NN).

After several months of working mostly alone through a combination of crash-course materials in Machine Learning and reviewing Stats and Linear Algebra, I was ready to team up with a handful of my friends and apply what I was learning to something fun. I figured out pretty quickly that I was speaking an entirely different language than my web-dev buddies; I had primarily been living in the temperate climate of Python, and they had been lounging on the beaches of JavaScript. We had to start by talking in broad-strokes. We decided to build something to do with the Simpsons, and as it turned out transcripts for all 673 episodes were readily available for people like use to play with.

Our idea was a web app that targeted Simpsons fans. As a baseline, we could build some kind of a quote finder. The user could give the gist of a something said on a Simpsons episode and our search engine would produce the most similar Simpsons quotes - something like how Soundhound finds songs when a user hums their best impression of a song they can't get out of their head

At this point, I had about two days of exposure to Natural Language Processing (NLP), and I had no idea how to get my model to interact with our web app being developed. I hadn't really thought about what an API is until I started this project. After about a day of stumbling through Google searches and fumbling my way through stack-overflow posts, it became apparent that I was going to have to figure it out - I needed an API to feed the results of my model, written in Python, over to my web developers. I'd built a couple flask web apps. Building a flask api was not terribly different - instead of a html front end, the routes in the flask app serve as End Points to connect to the web folk. My model would serve up results in JSON format when a Get request was sent to our flask API.

Natural Language Processing

The first step to applying any mathematical processing to a collection of words is to tokenize them. We identify which blobs of characters represent a word disregarding capitalization and punctuation and transform all those carefully composed sentences and paragraphs into a list of words separated by commas. Then we can throw out 'stopwords' - words that are not particularly helpful in determining what makes a document unique. If we are comparing collections of words, then the qualities which makes one document different from another can be viewed not only as the word counts, but the subtle mapping of these words. In this project I used spaCy to begin digging into these subtle word maps. Among many other powerful tools not used here, I have used spaCy's list of stopwords in my tokenizing function.

First, I instantiated spaCy and borrowed a collection of stop words from it.

nlp = spacy.load('en_core_web_lg')

STOPWORDS = nlp.Defaults.stop_words.union({' ', ''})I created a tokenizing function that applies some basic regex, python string processing, and uses spacy to do the heavy lifting of the actual tokenization.

def tokenize(doc):

text = re.sub(r'[^a-zA-Z ]', '', doc)

text = text.lower()

tokenizer = spacy.tokenizer.Tokenizer(nlp.vocab)

tokens = tokenizer(text)

list_of_tokens = [t for t in tokens if (str(t) not in STOPWORDS) and (t.is_punct == False)]

return (list_of_tokens)SpaCy is a pretty big package and has to be run locally. At the beginning of this project, I made the mistake of trying to include it in my project's pip environment. Even alone without the other project models, it was far too big to be deployed. There are more compact versions of the spaCy package that might be added to the final pickled model to live in the deployed app. In this case, I simply used spaCy to tokenize my words and used another package to vectorize them and mess with the language mapping.

Before vectorizing any words, I integrated my tokenized words back into my dataframe, by creating a new column that applies my tonkenizing function above to each line from the Simpsons.

df['tokens'] = df['spoken_words'].apply(tokenize)

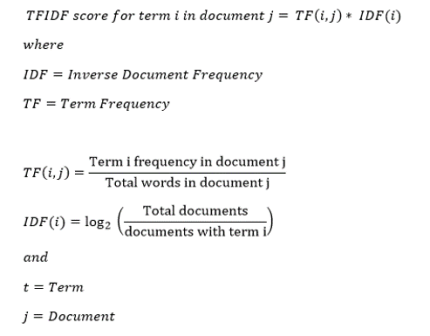

In order to do some powerful black-box-magic mathematical wizardry, I used Scikit-learn's TF-IDF vectorizer. A rudmentary way to vectorize a set of words is to simply count the occurrence of each word in a collection and compare it to other collections. These counts could be represented as a sparse matrix, mostly of zeros, counting the occurance of each particular word in a collection. With more wizardry, this sparse matrix can be crunched down into a "dense matrix" that preserves the same essential information about word counts, but is easier to push through ML models. What makes Term Frequency - Inverse Document Frequency (TF-IDF) unique is that it finds the most important words, or lemmas, for identifying what makes a document unique. It does so by penalizing the term frequencies of words that are common across all documents which allows for each document's most different topics to rise to the top.

Still exploring in my Jupyter notebook, I instantiate Scikit-learn's TF-IDF vectorizer.

from sklearn.feature_extraction.text import TfidfVectorizer

# Instantiate vectorizer object

tfidf = TfidfVectorizer(stop_words=STOPWORDS, lowercase=True, min_df=0.005, max_df=0.95)I then create a sparse matrix, convert it to a dense matrix, and convert the results into an inspect-able dataframe.

# Create a vocabulary and get word counts per document

sparse = tfidf.fit_transform(df['spoken_words'])

# Get feature names to use as dataframe column headers

dtm = pd.DataFrame(sparse.todense(), columns=tfidf.get_feature_names())

# View Feature Matrix as DataFrame

dtm.head()From here, applying a kNN model is as simple as instantiating and fitting it to the above dataframe:

# Instantiate kNN

from sklearn.neighbors import NearestNeighbors

# Fit on TF-IDF Vectors

nn = NearestNeighbors(n_neighbors=5, algorithm='ball_tree')

nn.fit(dtm)So, presumably it's working, but to see the results we have to create a quote to find similarity to:

in_text = 'got a lovely bunch of coconuts. there they are standing in a row'

# Query for similiar quotes...

new = tfidf.transform([in_text])

finder = nn.kneighbors(new.todense())This creates a Python object that we then look into. At position 1, this object reveals the position of the text in our original dataframe that our quote is similar to. So,

finder[1]drops us a list of indices for locating the top five most similar quotes according to the kNN model. The array looks like this:

array([[444, 891, 702, 277, 611]])We can filter our original dataframe using these numbers to find the most similar quotes to our input text. For example:

df['spoken_words'][891]The results of our kNN search from the above input text:

"Show me the thirty bucks, because if you ain't got it, I ain't gettin' off the stool."Not entirely convincing, so let's try something more familiar. This time, I'll grab the top five matches all at once.

in_text = 'don\'t have a frog man'

Top 5 Results:

'Don't be a fool, Aunt Selma. That man is scum.'

'No, Pie Man! Don't do it!'

'Now don't you pull that cord, young man!'

'Don't have a cow, man!'

'Please, FBI man, don't throw us in jail. We just made one mistake.'Flask API deployed on Heroku

The first step to building our model's API was to create a model.py file with a condensed version of the code above as a single function - taking input text and outputs one simulacra quote in JSON format. At some point, this big function needed to account for the possibility of a blank submission, for which the if/else statement accounts. Otherwise, the function below is a condensed version of the work above.

def get_quote(input_text):

'''

function to find most similar quote to input text

if input text is blank, a quote is chosen at random

row of dataframe containing similar quote is returned in json format

'''

if input_text == '':

# gets the index of a random quote in the DataFrame

rand_index = np.random.randint(len(df))

# gets the row containing the quote

quote_row = df.iloc[rand_index]

# converts output to json

json_output = quote_row.to_json()

return(json_output)

else:

# creates vector of input text

quote_vec = tfidf.transform([input_text])

# gets an object that contains the index values for similar quotes

similar_quotes = nn_model.kneighbors(quote_vec.todense())[1][0]

# generates a number to select out of the most similar quotes at random

i = np.random.randint(len(similar_quotes))

# gets the index value for the selected quote

similar_index = similar_quotes[i]

# gets the quote from the dataframe and returns it

quote_row = df.iloc[similar_index]

# converts output to json

json_output = quote_row.to_json()

return(json_output)The trick to making the flask app into an API was to create a route that served as an endpoint. The web side of the project sends a GET request in the form of input text. The retrieval function in this route plugs the input text into the get_quote function above and returns the JSON object.

@app.route('/input', methods=['GET'])

def retrieval():

try:

if request.method == 'GET':

text = request.args.get('input_text') # If no key then null

output = get_quote(text)

return output # This is now the input variable into the model

except Exception as e:

# Unfortunately I'm not going to wrap this in indv. strings

r = Response(response=error_msg+str(e),

status=404,

mimetype="application/xml")

r.headers["Content-Type"] = "text/json; charset=utf-8"

return rHomer quote generator using a neural network

While my web developer friends were figuring out how to hook up the kNN API to the front end of our search feature, along with finishing some of their own pet features, I began playing around with an idea for yet another feature that understandably never made the cut. It was, however, an entertaining venture, and I think it was fundamentally a good idea even if it's not quite ready for production.

The idea was to take the entire opus of Homer Simpson and train a neural network on it using NLP to try to make a Homer Quote Generator. It's not an entirely far-fetched idea. As I am writing this, I believe I may have figured out how to make it work better by using Scikit-learn to map words instead of charters as I did with the search feature. In any case, here's what I've done so far.

First I filtered my dateframe down to only Homer.

homer_opus = df[df['raw_character_text'] == 'Homer Simpson'].reset_index()I then chopped out the shorter quotes that were smaller than 64 characters - a little arbitrary, but it's a nice number.

homer_opus['spoken_words'] = homer_opus['spoken_words'].astype('str')

mask = (homer_opus['spoken_words'].str.len() > 64)

homer_opus = homer_opus.loc[mask]Next I took the dataframe and exported the whole of these filtered Homer quotes into a txt file as one big blob of text. Maybe I should have kept quotes in smaller units and buffered the end of each with zeros as I vectorized, but the blob method seemed easier to start out with. Instead of using spaCy and TFIDF to work with words, I decided to start by training the Neural Network from the level of individual characters. As I am writing this several months later, it is apparent what I should do to make this feature work if I can carve out the time to come back to it.

After converting all the text to lowercase and removing punctuation, I then numbered every character.

# create mapping of unique chars to integers

chars = sorted(list(set(raw_text)))

char_to_int = dict((c, i) for i, c in enumerate(chars))This reduced the 1.5 million or so characters of Homer's opus into a long string of 69 different numbers. Starting from left to right, I then took each successive window of 64 characters, including overlap, and added them to a list that I split into X and Y. I then normalized my X values and categorically encoded my target vector Y.

After a number of iterations, I settled into a relatively simple Keras model NN with only three layers built with dropout layers in between.

model = Sequential()

model.add(LSTM(256, input_shape=(X.shape[1], X.shape[2]), return_sequences=True))

model.add(Dropout(0.10))

model.add(LSTM(256))

model.add(Dropout(0.10))

model.add(Dense(y.shape[1], activation='softmax'))

model.compile(loss='categorical_crossentropy', optimizer='adam')After compiling my model, I fit it and ran 100 epochs - with batch sizes perhaps too small. Even with this relatively thin model, each epoch took around 3 minutes running on a P6000 Paperspace GPU. It took a stupid amount of time to get the loss to finally settle around 1.5. Unfortantly for me, I hadn't wrtten in early stopping, so I had to just watch it spin for a while even after reaching a sort of homeostasis. By the time I had my results, the five days to slap this project together was at an end, with no time left to refactor my approach to the problem.

My results are something at least vaguely resembling the English language. I built a function to look inside what I had made. The model grabs a random chunk of Homer text as a seed for the model to generate from.

# pick a random seed

start = np.random.randint(0, len(dataX)-1)

pattern = dataX[start]

print ("\"", ''.join([int_to_char[value] for value in pattern]), "\"")

# generate characters

for i in range(80):

x = np.reshape(pattern, (1, len(pattern), 1))

x = x / float(n_vocab)

prediction = model.predict(x, verbose=0)

index = np.argmax(prediction)

result = int_to_char[index]

seq_in = [int_to_char[value] for value in pattern]

sys.stdout.write(result)

pattern.append(index)

pattern = pattern[1:len(pattern)]I will leave my reader to decide where the seed ends and where the generator begins. It's really not bad considering the model started with individual characters. However, the model may not be ready for any kind of Turing test.

"nice to meet you. i had no idea. mr. burns, i think we can start the best friends in the world. i was the people who can do that"

- Michael Curry

- Data Scientist